5 Common AI Policy Mistakes (And How to Avoid Them)

Many organizations rush to create AI policies in response to the EU AI Act without addressing critical gaps. These policies often sound comprehensive on paper but fail in practice. Here are the five most common mistakes and practical solutions.

Mistake 1: Being Too Vague or Generic

The Problem

Many AI policies read like compliance documents written by lawyers for lawyers. They include statements like:

- "AI shall be used responsibly"

- "Employees must follow best practices"

- "AI systems will comply with applicable regulations"

While technically true, these provide no actionable guidance. Employees don't know what "responsible use" means in practice. When faced with real decisions – Can I upload this customer data? Should I use AI-generated code? – vague policies offer no help.

Why It Happens

Organizations copy templates without customization, prioritize legal defensibility over usability, or rush policy creation to check a compliance box without thinking through actual use cases.

The Solution

Make it Specific and Actionable

Instead of generic statements, provide clear rules:

Vague: "Use AI tools appropriately"

Better:

- "Do not upload customer data, source code, or confidential business information to public AI tools like ChatGPT"

- "AI-generated content must be reviewed by a qualified employee before publication"

- "AI tools for hiring must be approved by HR and Legal before deployment"

Include Real Examples

Add scenarios employees will actually encounter:

Example 1: Using AI for Content Creation

- ✅ DO: Use AI to draft a first version of marketing copy, then edit and verify

- ❌ DON'T: Publish AI-generated content without human review

- ❌ DON'T: Use AI to generate content about regulated topics (medical, legal, financial) without expert review

Example 2: Customer Service AI

- ✅ DO: Inform customers they're interacting with an AI chatbot

- ✅ DO: Provide easy escalation to human support

- ❌ DON'T: Use AI for sensitive customer complaints without human oversight

Define Key Terms

Don't assume everyone knows what you mean by "AI" or "sensitive data." Provide clear definitions and examples.

Mistake 2: Ignoring Data Protection and Privacy

The Problem

Organizations create AI policies that don't address how personal data flows through AI systems. They forget that the GDPR doesn't pause just because you're using AI – in fact, AI raises additional privacy concerns.

Common oversights include:

- No guidance on what data can be input to AI tools

- Unclear data retention practices for AI-processed information

- Missing consent or legal basis for AI processing

- No consideration of individual rights (access, erasure, objection to automated decisions)

Why It Happens

AI policy and data protection policy are often owned by different teams. The AI policy focuses on the technology while ignoring that most AI systems process personal data, which triggers GDPR obligations.

The Solution

Integrate GDPR Requirements

Your AI policy must address:

1. Legal Basis for Processing

- Identify the lawful basis (for example consent, contract, or legitimate interests) for each AI use case

- Document why AI processing is necessary and proportionate

- Update privacy notices to explain AI processing

2. Data Minimization

- Only use personal data necessary for the AI's purpose

- Anonymize or pseudonymize data where possible

- Remove unnecessary data before AI processing

3. Data Subject Rights

- Provide meaningful information about AI decision-making

- Enable individuals to obtain human review of automated decisions

- Respect rights to object or request deletion

4. Data Protection Impact Assessments

- Require DPIAs before deploying AI that involves:

  - Large-scale processing of sensitive data

  - Automated decision-making with significant effects

  - Systematic monitoring

  - Profiling

- Document and mitigate identified risks

5. Vendor Data Processing

- Ensure GDPR-compliant data processing agreements with AI vendors

- Verify vendors' data security and privacy practices

- Clarify data location, retention, and deletion procedures
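The data minimization step above (item 2) can be illustrated with a short Python sketch. It is only an illustration under stated assumptions: the field names, the `ALLOWED_FIELDS` allow-list, and the helper names `pseudonymize` and `minimize_record` are hypothetical and not part of any particular tool. It drops fields the AI use case does not need and replaces the customer identifier with a keyed hash before the record leaves your systems.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields this AI use case actually needs.
ALLOWED_FIELDS = {"customer_id", "ticket_text", "product"}


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256).

    The same value and key always produce the same token, so records can
    still be linked, but the original identifier is not sent to the AI tool.
    """
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Drop fields that are not needed and pseudonymize the identifier."""
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    if "customer_id" in reduced:
        reduced["customer_id"] = pseudonymize(str(reduced["customer_id"]), secret_key)
    return reduced


if __name__ == "__main__":
    # Example key only; a real key would live in a secrets manager.
    key = b"example-key-for-illustration-only"
    raw = {
        "customer_id": "C-1042",
        "email": "jane@example.com",
        "ticket_text": "My order arrived damaged.",
        "product": "Kettle",
    }
    print(minimize_record(raw, key))
```

Two caveats apply. Pseudonymized data still counts as personal data under the GDPR, so this reduces risk but does not remove your obligations. Free-text fields such as `ticket_text` can also contain personal data and may need separate redaction.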

Create Clear Data Handling Rules

Example Rules:

- "Before using AI to process personal data, confirm you have a valid legal basis and the processing is documented in our data inventory"

- "Customer data from our CRM may not be exported to AI tools without Data Protection Officer approval"

- "AI-generated insights about individuals must be reviewable by those individuals upon request"
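Rules like the first two above can also be expressed as a simple pre-flight check. The following is a minimal Python sketch, not a complete control: the `AIDataRequest` fields and the `blocking_reasons` function are hypothetical names chosen for illustration, and a real check would pull these facts from your data inventory and approval records.

```python
from dataclasses import dataclass


@dataclass
class AIDataRequest:
    """Hypothetical description of a planned use of data in an AI tool."""
    contains_personal_data: bool
    legal_basis_documented: bool  # recorded in the data inventory
    source_system: str            # e.g. "crm"
    dpo_approved: bool


def blocking_reasons(req: AIDataRequest) -> list[str]:
    """Return the reasons this request may not proceed (empty list means OK)."""
    reasons = []
    if req.contains_personal_data and not req.legal_basis_documented:
        reasons.append("No documented legal basis in the data inventory")
    if req.source_system.lower() == "crm" and not req.dpo_approved:
        reasons.append("CRM export requires Data Protection Officer approval")
    return reasons


if __name__ == "__main__":
    request = AIDataRequest(
        contains_personal_data=True,
        legal_basis_documented=False,
        source_system="CRM",
        dpo_approved=False,
    )
    for reason in blocking_reasons(request):
        print("BLOCKED:", reason)
```

Returning a list of reasons rather than a single yes/no makes it easier to tell the employee exactly which rule they need to satisfy.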

Mistake 3: No Training or Awareness Program

The Problem

Organizations write comprehensive AI policies, then file them away. Employees never read them, don't understand them, or don't know they exist. When employees make AI-related decisions, they rely on intuition rather than policy.

The result: Well-intentioned employees violate policies they don't know about, creating legal and operational risks.

Why It Happens

Organizations treat policy creation as the endpoint rather than the beginning. They underestimate the change management needed to shift employee behavior around AI use.

The Solution

Implement AI Literacy Training

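Article 4 of the EU AI Act requires providers and deployers to take measures to ensure a sufficient level of AI literacy among staff and other people who operate or use AI systems on their behalf. Your training should cover: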

Foundational Concepts:

- What AI is and how it works (at a high level)

- Benefits and limitations of AI

- Common failure modes and biases

- Why AI governance matters

Your Organization's Approach:

- Your specific AI policy and where to find it

- Approved vs. prohibited AI uses

- How to request approval for new AI tools

- Who to contact with questions

Practical Skills:

- How to use approved AI tools correctly

- How to identify potential AI risks

- How to report AI issues or incidents

- How to review and verify AI outputs

Make Training Engaging and Relevant

Bad Training: Generic e-learning module about AI ethics with no connection to actual work

Good Training:

- Role-specific scenarios (different training for sales, engineering, HR, customer service)

- Interactive elements and real examples from your organization

- Short, focused sessions (not one massive course)

- Regular refreshers, not just one-time onboarding

Create Ongoing Awareness

Beyond formal training:

- Quick Reference Guides: One-page cheat sheets for common scenarios

- Regular Updates: Email newsletter or Slack channel highlighting AI policy reminders

- Success Stories: Share examples of correct AI use

- Easy Access: Policy and guidance available on the internal wiki, not buried in a document management system

Measure Understanding

- Require training completion and track it

- Include knowledge checks or quizzes

- Gather feedback on confusing areas

- Update training based on actual questions employees ask

Mistake 4: Lack of Enforcement and Accountability

The Problem

The policy says "AI must be approved before use" but there's no approval process. It prohibits certain uses but there's no way to detect violations. It assigns responsibilities but no one is actually accountable.

Without enforcement mechanisms, your policy is just aspirational – not operational.

Why It Happens

Organizations focus on documenting "what" should happen without designing "how" it will be enforced. They assume policies self-execute through employee goodwill.

The Solution

Assign Clear Ownership

Every AI governance task needs an owner:

AI Governance Committee/Board:

- Reviews and approves new AI use cases

- Makes high-risk AI deployment decisions

- Oversees policy compliance

- Escalates serious issues

AI Officer / Designated Role:

- Day-to-day policy interpretation

- Approval workflow management

- Incident response coordination

- Training program oversight

Department Leads:

- Ensure their teams follow policy

- Review AI use in their area

- Report issues upward

Individual Employees:

- Follow policy in daily work

- Complete required training

- Report concerns or violations

Implement Technical Controls

Where possible, enforce policy through technology:

- Access Controls: Restrict who can use which AI tools

- Data Loss Prevention: Block sensitive data from reaching prohibited AI endpoints

- Logging and Monitoring: Track AI tool usage

- Approval Workflows: Require documented approval for new AI deployments
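To make the Data Loss Prevention item more concrete, here is a minimal Python sketch of an outbound check. Everything in it is an assumption for illustration: the host name in `APPROVED_AI_HOSTS`, the two regular expressions, and the `check_outbound` function are hypothetical, and commercial DLP products use far richer detection than simple patterns.

```python
import re
from urllib.parse import urlparse

# Hypothetical list; a real deployment would load this from the approved tools list.
APPROVED_AI_HOSTS = {"ai.internal.example.com"}

# Deliberately simple patterns for illustration only.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "IBAN-like string": re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),
}


def check_outbound(url: str, payload: str) -> tuple[bool, list[str]]:
    """Return (allowed, findings) for a request to an AI endpoint."""
    host = urlparse(url).hostname or ""
    findings = [name for name, pattern in SENSITIVE_PATTERNS.items()
                if pattern.search(payload)]
    if host in APPROVED_AI_HOSTS:
        # Approved tool: allow, but return findings so they can be logged.
        return True, findings
    # Unapproved endpoint: block if anything sensitive was detected.
    return (not findings), findings


if __name__ == "__main__":
    allowed, found = check_outbound(
        "https://chat.example.org/api", "Please reply to jane@example.com"
    )
    print("allowed" if allowed else "blocked", found)
```

This sketch only blocks sensitive content going to unapproved hosts. An organization that prohibits unapproved tools entirely would block on the host alone.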

Create Accountability Mechanisms

Pre-Deployment:

- New AI tools require written approval

- High-risk AI requires impact assessment

- Documented review before procurement

Ongoing:

- Regular audits of AI use vs. policy

- Spot checks of AI outputs

- Employee attestations of policy compliance

- Vendor compliance reviews

Post-Incident:

- Incident investigation process

- Root cause analysis

- Corrective action plans

- Consequences for violations (progressive discipline)

Measure and Report

Track metrics like:

- AI tools in use (authorized vs. shadow IT)

- Training completion rates

- Policy violations identified and resolved

- Incident frequency and severity

- Time to resolve AI issues

Report these to leadership regularly to maintain accountability at all levels.
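Several of these metrics are simple ratios that can be computed from counts you already collect. The Python sketch below shows one possible way to do it. The `GovernanceSnapshot` fields, the metric names, and the sample numbers are all hypothetical and would need to match your own inventory and training records.

```python
from dataclasses import dataclass


@dataclass
class GovernanceSnapshot:
    """Hypothetical counts gathered for one reporting period."""
    authorized_tools: int
    shadow_tools: int           # tools found in use without approval
    employees_in_scope: int
    employees_trained: int
    violations_identified: int
    violations_resolved: int


def percentage(part: int, whole: int) -> float:
    """Return part/whole as a percentage, or 0.0 when whole is zero."""
    return round(100 * part / whole, 1) if whole else 0.0


def summarize(s: GovernanceSnapshot) -> dict[str, float]:
    """Compute the ratios leadership is most likely to ask about."""
    total_tools = s.authorized_tools + s.shadow_tools
    return {
        "shadow_it_share_pct": percentage(s.shadow_tools, total_tools),
        "training_completion_pct": percentage(s.employees_trained, s.employees_in_scope),
        "violation_resolution_pct": percentage(s.violations_resolved, s.violations_identified),
    }


if __name__ == "__main__":
    # Sample numbers for illustration only.
    snapshot = GovernanceSnapshot(12, 3, 200, 170, 8, 6)
    for name, value in summarize(snapshot).items():
        print(f"{name}: {value}")
```

Tracking the same ratios each period matters more than the exact definitions, since the trend is what shows whether enforcement is working.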

Mistake 5: Creating a Static Policy Instead of a Living Document

The Problem

Organizations create an AI policy in 2025 to address the AI Act, then never update it. Meanwhile:

- New AI tools emerge constantly

- Regulations evolve (guidance, standards, enforcement decisions)

- Your organization's AI uses change

- New risks are discovered

A static policy quickly becomes outdated and irrelevant.

Why It Happens

Policies are seen as "set it and forget it" compliance artifacts. Updating policies is painful (legal review, approvals, redistribution), so organizations avoid it.

The Solution

Build in Regular Reviews

Schedule policy reviews:

- Quarterly Light Review: Quick check for obvious gaps or updates needed

- Annual Comprehensive Review: Full assessment against current AI use, regulatory changes, and industry best practices

- Triggered Reviews: Update policy when:

  - New AI Act guidance is published

  - You deploy significant new AI capabilities

  - An AI-related incident reveals policy gaps

  - Major organizational changes affect AI governance
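The review schedule above can be tracked with a small script rather than relying on memory. This is a rough Python sketch under stated assumptions: the interval lengths (91 and 365 days), the `reviews_due` function, and the free-text trigger descriptions are hypothetical choices, not requirements from any regulation.

```python
from datetime import date, timedelta

# Assumed intervals: roughly quarterly and annual.
LIGHT_REVIEW_INTERVAL = timedelta(days=91)
FULL_REVIEW_INTERVAL = timedelta(days=365)


def reviews_due(today: date, last_light: date, last_full: date,
                trigger_events: list[str]) -> list[str]:
    """Return the policy reviews that are currently due."""
    due = []
    if trigger_events:
        due.append("triggered review: " + ", ".join(trigger_events))
    if today - last_full >= FULL_REVIEW_INTERVAL:
        # A comprehensive review also covers the light review.
        due.append("annual comprehensive review")
    elif today - last_light >= LIGHT_REVIEW_INTERVAL:
        due.append("quarterly light review")
    return due


if __name__ == "__main__":
    print(reviews_due(
        today=date(2025, 10, 1),
        last_light=date(2025, 6, 1),
        last_full=date(2025, 1, 15),
        trigger_events=["new AI Act guidance published"],
    ))
```

In practice the same logic could live in a calendar reminder or a ticketing rule. What matters is that each review has a recorded last-completed date and an owner.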

Create Modular Policy Structure

Make updates easier with a modular approach:

Core Policy (changes rarely):

- Overarching principles

- Governance structure

- Key definitions

Specific Guidelines (updated frequently):

- Approved tools list

- Use case examples

- Departmental procedures

- Technical requirements

Annexes (constantly evolving):

- AI system inventory

- Vendor assessments

- Training materials

- Forms and templates

This way you can update the frequently changing parts without a full policy revision.

Maintain Version Control

- Date every policy version

- Document what changed and why

- Archive old versions

- Communicate changes to affected employees

- Re-train when significant updates occur

Gather Continuous Feedback

Create channels for employees to:

- Ask questions about policy interpretation

- Report unclear or impractical requirements

- Suggest improvements based on real-world use

This feedback reveals where policy doesn't match reality.

Stay Informed

Monitor:

- EU AI Act implementing acts and guidelines

- Enforcement decisions and case law

- Industry standards and codes of conduct

- Security vulnerabilities in AI systems

- Best practices from other organizations

Assign someone to track these and trigger policy reviews when relevant changes occur.

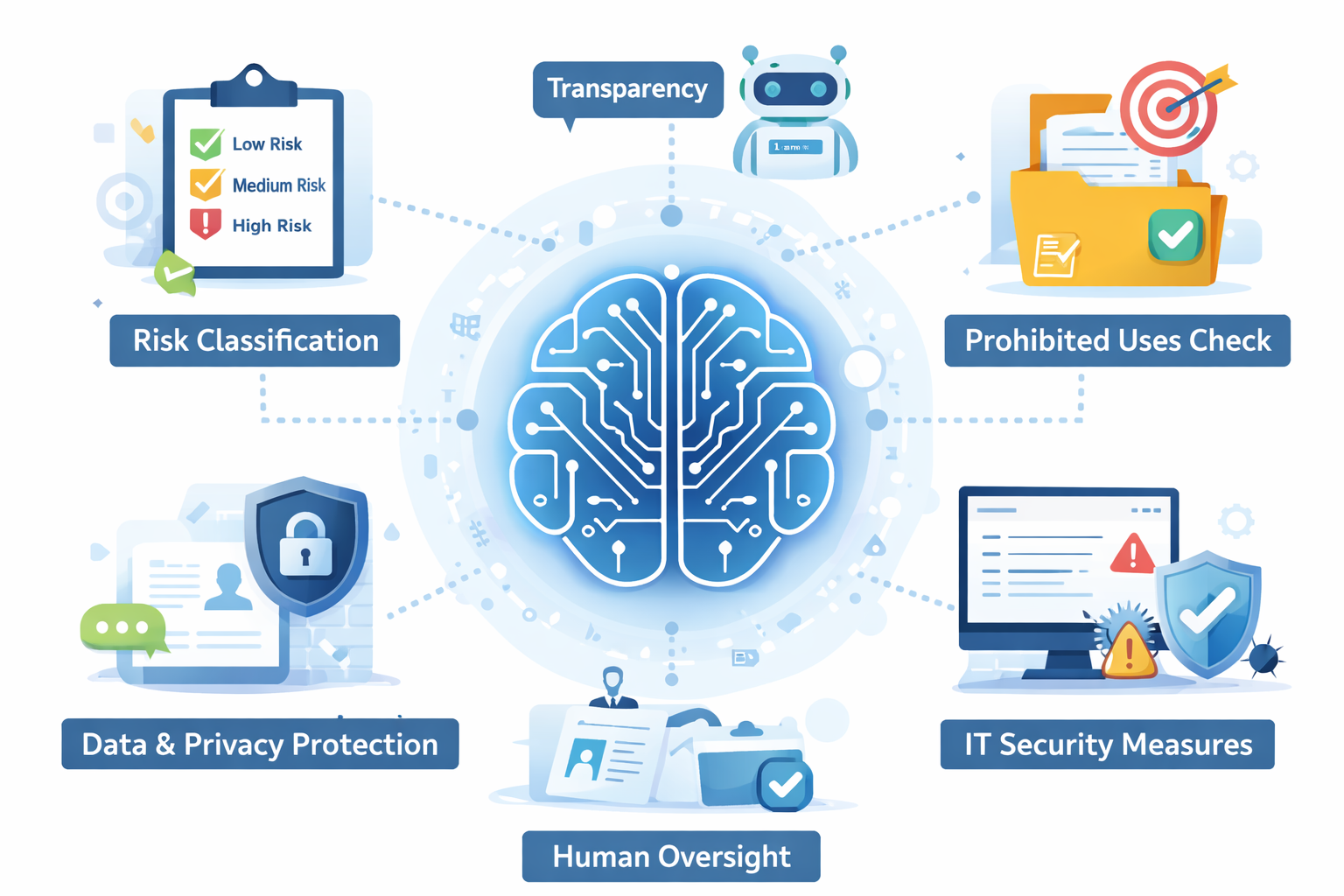

Putting It All Together: The Effective AI Policy

An effective AI policy is:

- Specific: Clear rules and examples, not vague principles

- Integrated: Aligned with GDPR, security, and other policies

- Understood: Backed by training and ongoing awareness

- Enforced: With clear ownership, technical controls, and accountability

- Dynamic: Regularly reviewed and updated

Quick Assessment: Is Your AI Policy Effective?

Ask yourself:

- Could an employee read your policy and know what to do in specific situations?

- Does your policy address personal data handling in AI?

- Have all employees using AI completed training on your policy?

- Can you detect and respond to policy violations?

- Has your policy been reviewed and updated within the past year?

If you answered "no" or "I don't know" to any question, your policy likely suffers from at least one of these five mistakes.

Next Steps

- Audit your current policy against these five mistakes

- Prioritize fixes based on your highest risks

- Engage stakeholders (Legal, IT, Privacy, HR, business units)

- Update incrementally – you don't need to fix everything at once

- Measure effectiveness – track whether behavior actually changes

Remember: the goal isn't a perfect policy document. The goal is an effective AI governance system that enables safe, compliant, and valuable use of AI in your organization. The policy is just one tool toward that end.
