Provider vs Deployer: Which AI Act Role Are You?

The AI Act assigns different obligations based on your role. Understanding whether you're a "provider" or "deployer" determines what compliance steps you must take.

One of the first questions in AI Act compliance is your role in the AI value chain. Your obligations depend on whether you are classified as a "provider," a "deployer," or another role such as importer or distributor, and on the risk category of the system involved. Getting the role wrong means missing compliance requirements that apply to you.

Understanding the Roles

Provider Definition

A provider is an organization that:

- Develops an AI system

- Has an AI system developed and places it on the market or puts it into service under its own name or trademark

- Makes a substantial modification to an existing AI system or changes its intended purpose

Key Point: Providers bear the primary responsibility for ensuring AI systems meet technical requirements, especially for high-risk systems.

Deployer Definition

A deployer is an organization that:

- Uses an AI system under its own authority

- Does not place the system on the market or put it into service for others

- Uses the AI system for its intended purpose within its own operations

Key Point: Deployers must use AI responsibly according to instructions, monitor its performance, and ensure appropriate human oversight.

Why This Distinction Matters

The provider/deployer distinction determines:

- Compliance burden: Providers face more extensive technical obligations

- Liability exposure: Different responsibilities mean different risk profiles

- Documentation requirements: Providers create the technical documentation and instructions for use; deployers keep the logs and usage records under their control

- Resource needs: Provider obligations typically require more technical expertise

Common Scenarios and Role Classification

Scenario 1: SaaS AI Tool for Internal Use

Situation: Your company subscribes to an AI-powered customer service platform.

Your Role: Deployer

- You're using a third-party system as intended

- The SaaS vendor is the provider

- You must follow their instructions and monitor performance

Your Obligations:

- Use the system according to provider instructions

- Conduct a data protection impact assessment (DPIA) where GDPR requires one for the personal data processed

- Ensure human oversight where required

- Monitor for adverse outcomes

- Report serious incidents to the provider and, where required, to the relevant authorities

Scenario 2: Custom AI Development for Your Business

Situation: You hire developers to build a custom AI system exclusively for your company's operations.

Your Role: Provider

- You commissioned the development

- The system operates under your responsibility

- You control its design and deployment

Your Obligations:

- Meet all provider requirements for your system's risk category

- Conduct conformity assessments if high-risk

- Maintain technical documentation

- Ensure data quality and governance

- Implement risk management systems

Scenario 3: AI-Powered Product Sold to Customers

Situation: Your company sells software with embedded AI functionality to end customers.

Your Role: Provider (for the product you sell)

Also: Deployer (if you use third-party AI tools internally)

Your Obligations:

- Full provider responsibilities for your product's AI

- Must ensure CE marking if high-risk

- Provide instructions and documentation to customers

- Separate deployer obligations for any AI you use internally

Scenario 4: White-Label AI Solution

Situation: You resell an AI system under your own brand name without modifying it.

Your Role: Provider

- Placing it on the market under your name makes you the provider

- Original developer may still have obligations, but you assume provider role

- This applies even if you don't modify the technology

Your Obligations:

- Full provider compliance responsibilities

- Ensure the underlying system meets requirements

- Maintain all required documentation

- May need contractual protection from original developer

Scenario 5: Modifying Third-Party AI

Situation: You substantially modify a third-party AI system or change its intended purpose.

Your Role: Potentially Provider

- Substantial modifications that change intended purpose make you a provider

- Minor configurations or customizations don't trigger provider status

- The line between "modification" and "use" can be unclear

Your Obligations:

- If substantial modification: full provider obligations

- If minor customization: remain a deployer

Detailed Provider Obligations

Core Obligations (the Act makes most of these mandatory only for high-risk systems):

- Quality Management: Implement systems for compliance monitoring

- Documentation: Maintain technical documentation demonstrating compliance

- Record-Keeping: Keep logs of system operation (for high-risk systems)

- Transparency: Provide clear information to deployers and users

- Post-Market Monitoring: Track performance after deployment

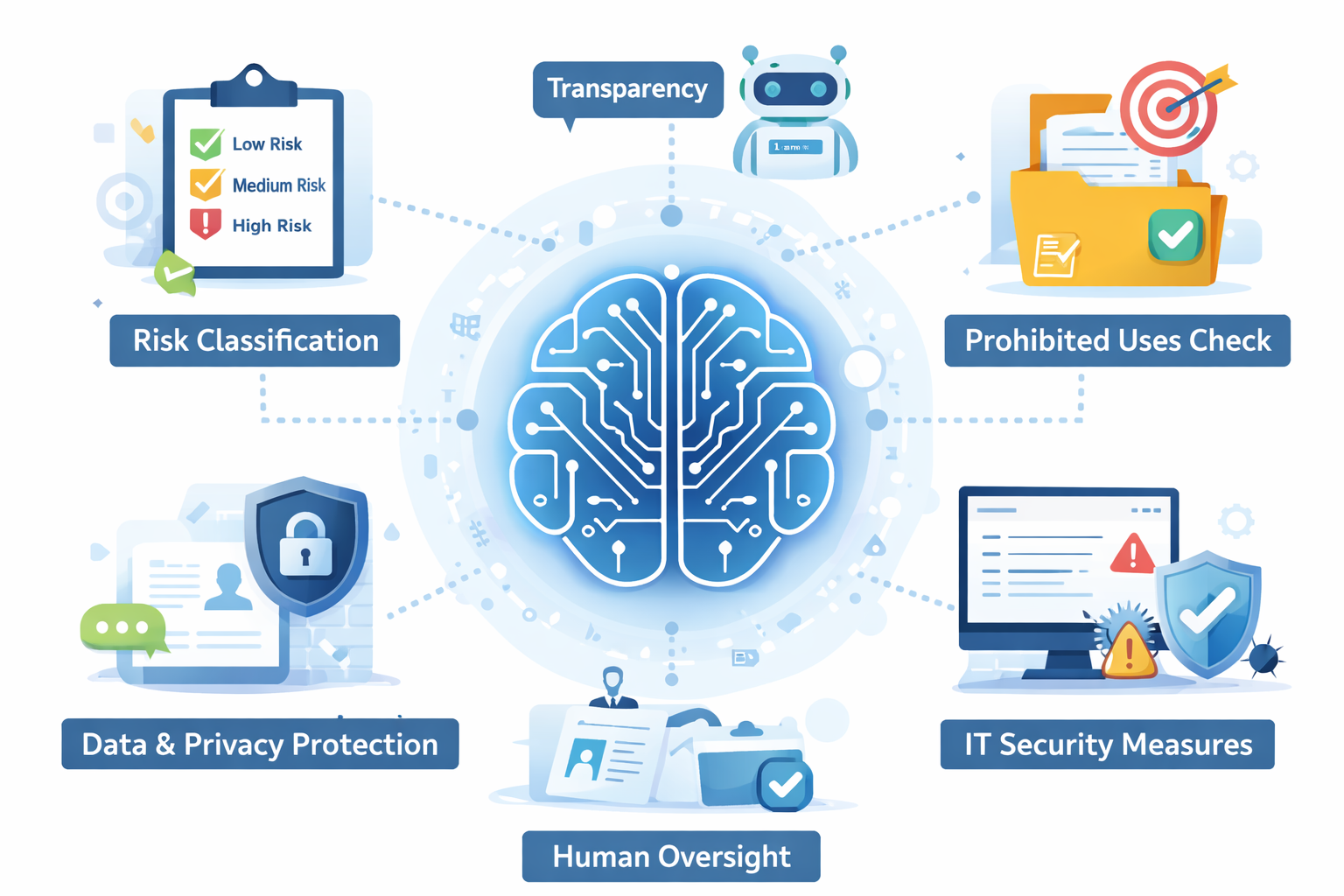

Additional Requirements for High-Risk Systems:

- Risk Management: Continuous identification and mitigation of risks

- Data Governance: Ensure training data quality and relevance

- Technical Documentation: Comprehensive description of system design and operation

- Automatic Logging: Design systems to log events automatically

- Human Oversight: Enable meaningful human supervision

- Accuracy & Robustness: Ensure appropriate performance levels

- Cybersecurity: Make the system resilient against unauthorized attempts to alter its use, outputs, or performance

- Conformity Assessment: Undergo assessment before market placement

- CE Marking: Affix marking to compliant high-risk systems

- Registration: Register high-risk systems in EU database

Detailed Deployer Obligations

Core Obligations (binding under the Act mainly for high-risk systems):

- Follow Instructions: Use system according to provider's directions

- Input Data: Ensure input data is relevant for intended purpose

- Monitor: Oversee AI system operation based on instructions

- Record-Keeping: Keep logs as specified by provider (high-risk systems)

Additional Requirements for High-Risk Systems:

- Human Oversight: Assign qualified individuals to supervise the system

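- Impact Assessment: Conduct a fundamental rights impact assessment before first use where the Act requires one (for example, public bodies and deployers of credit-scoring or life/health insurance systems)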

- Incident Reporting: Report serious incidents to provider and authorities

- Data Protection: Comply with GDPR, including DPIAs where required

- Suspend/Discontinue: Stop using system if non-conformity or risk identified

- Cooperation: Work with authorities on compliance matters

Deployer-Specific High-Risk Categories:

Deployers face additional obligations when using AI for:

- Employment decisions (hiring, promotion, termination)

- Access to education and vocational training

- Access to essential private services and public assistance

- Evaluating creditworthiness

- Assessing risk in life/health insurance pricing

The Gray Areas: When Classification Is Unclear

Integration and Customization

Adding AI as a component in a larger system can create ambiguity:

- Simply integrating third-party AI: Usually a deployer

- Extensively customizing AI behavior: May become a provider

- Creating new functionality: Likely a provider

Open-Source AI Models

Using open-source models raises questions:

- Downloading and using as-is: Typically a deployer

- Fine-tuning for specific purposes: Gray area, depends on extent

- Distributing modified version: Likely a provider

AI-as-a-Service with Customization

Cloud AI services with significant customization options:

- Using standard API: Deployer

- Extensive model training/customization: May shift toward provider

- Threshold depends on how much you control system behavior

How to Determine Your Role: Decision Framework

Step 1: Ask Core Questions

- Did we develop this AI system or have it developed for us?

- Do we place this system on the market for others?

- Do we use this under our own authority for our operations?

- Did we substantially modify an existing system?

Step 2: Apply the Tests

- Development Test: If you controlled development → Provider

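- Market Test: If you sell or distribute the system to others under your own name → Provider

- Authority Test: If you only use the system in your own operations and none of the other tests apply → Deployer

- Modification Test: If you substantially modified the system or changed its intended purpose → Provider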
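
The four tests can be sketched as a small screening function. This is a minimal illustration for a first-pass triage, not a legal determination: the `SystemFacts` field names, the `Role` enum, and the rule that any provider test takes precedence over the Authority Test are assumptions made for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    UNCLEAR = "unclear"


@dataclass
class SystemFacts:
    """Yes/no answers to the Step 1 questions (hypothetical field names)."""
    controlled_development: bool    # Development Test
    offered_to_others: bool         # Market Test
    used_in_own_operations: bool    # Authority Test
    changed_intended_purpose: bool  # Modification Test


def classify_role(facts: SystemFacts) -> tuple[Role, list[str]]:
    """Return a first-pass role plus the names of the tests that led to it."""
    provider_reasons = []
    if facts.controlled_development:
        provider_reasons.append("Development Test")
    if facts.offered_to_others:
        provider_reasons.append("Market Test")
    if facts.changed_intended_purpose:
        provider_reasons.append("Modification Test")

    # Assumption: any provider test outweighs the Authority Test.
    if provider_reasons:
        return Role.PROVIDER, provider_reasons
    if facts.used_in_own_operations:
        return Role.DEPLOYER, ["Authority Test"]
    # No test matched: flag the system for manual (legal) review.
    return Role.UNCLEAR, []


# Scenario 1: subscribing to a third-party SaaS tool for internal use.
saas_tool = SystemFacts(
    controlled_development=False,
    offered_to_others=False,
    used_in_own_operations=True,
    changed_intended_purpose=False,
)
role, reasons = classify_role(saas_tool)
print(role.value, reasons)
```

Returning the matched tests alongside the role keeps the reasoning visible, which is useful when the result is later written down. Gray-area cases such as fine-tuning still need human judgment and should not be forced into a boolean answer.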

Step 3: Document Your Analysis

Record your reasoning because:

- Authorities may question your classification

- It guides your compliance approach

- It clarifies vendor relationships

- It helps allocate resources
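
One way to keep that record is a small structured entry per system. The sketch below is an assumption about what such a record might hold; the `RoleAssessment` fields, the example system, and the dates are hypothetical and are not prescribed by the AI Act.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date
from typing import List


@dataclass
class RoleAssessment:
    """One documented role decision for one AI system (illustrative fields)."""
    system_name: str
    role: str              # "provider" or "deployer"
    reasoning: List[str]   # why this role was chosen
    assessed_on: str       # ISO 8601 date of the analysis
    review_due: str        # when to reassess, since roles can shift


def to_json(record: RoleAssessment) -> str:
    """Serialize the record so it can be filed with other compliance evidence."""
    return json.dumps(asdict(record), indent=2)


record = RoleAssessment(
    system_name="Customer service platform",
    role="deployer",
    reasoning=[
        "Third-party SaaS system used as intended",
        "Not placed on the market or rebranded by us",
    ],
    assessed_on=date(2025, 1, 15).isoformat(),
    review_due=date(2026, 1, 15).isoformat(),
)
print(to_json(record))
```

Storing the reasoning as a list of short statements makes it easy to show an authority or a vendor exactly which facts the classification rested on.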

Multiple Roles Simultaneously

Many organizations will be both providers and deployers:

Example: A company that:

- Develops an AI-powered SaaS product (Provider for this)

- Uses third-party AI for internal HR (Deployer for this)

- Purchases AI analytics tools (Deployer for these)

Each use case requires separate role analysis and corresponding compliance measures.
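
A per-system inventory makes this separation concrete. The following sketch groups the example company's systems by role; the tuple layout and the `group_by_role` helper are illustrative assumptions, not a required format.

```python
from collections import defaultdict

# (system name, role) pairs mirroring the example company above.
inventory = [
    ("AI-powered SaaS product", "provider"),
    ("Third-party AI for internal HR", "deployer"),
    ("Purchased AI analytics tools", "deployer"),
]


def group_by_role(entries):
    """Group system names under the role the organization holds for each."""
    grouped = defaultdict(list)
    for name, role in entries:
        grouped[role].append(name)
    return dict(grouped)


for role, systems in group_by_role(inventory).items():
    print(f"{role}: {', '.join(systems)}")
```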

Practical Implications for Compliance

If You're a Provider:

- Budget significantly for compliance, especially if offering high-risk systems

- Invest in technical expertise for conformity assessments

- Expect longer timelines before market launch

- Plan for ongoing obligations even after system deployment

- Consider insurance for provider liability

If You're a Deployer:

- Vet your AI vendors carefully – their compliance affects you

- Maintain operational documentation of how you use systems

- Implement monitoring processes for AI performance

- Train personnel on proper AI use and oversight

- Prepare impact assessments before high-risk deployments

Contractual Considerations

Provider-Deployer Contracts Should Address:

- Role Clarity: Explicit statement of who is provider vs. deployer

- Compliance Responsibilities: Clear allocation of obligations

- Information Sharing: Provider's duty to supply documentation

- Incident Procedures: How serious incidents are reported and handled

- Modification Rights: What changes deployer can make without becoming provider

- Liability Allocation: Who bears risk for compliance failures

- Audit Rights: Deployer's ability to verify provider compliance
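
The clause list above can double as a review checklist. As a rough sketch, the snippet below reports which topics a draft contract has not yet covered; the topic keys and the `missing_topics` helper are hypothetical names chosen for this example.

```python
# Checklist keys derived from the clause list above (names are illustrative).
CONTRACT_TOPICS = (
    "role_clarity",
    "compliance_responsibilities",
    "information_sharing",
    "incident_procedures",
    "modification_rights",
    "liability_allocation",
    "audit_rights",
)


def missing_topics(covered):
    """Return the checklist topics not yet addressed, in checklist order."""
    covered_set = set(covered)
    return [topic for topic in CONTRACT_TOPICS if topic not in covered_set]


# Topics a reviewer has ticked off in a hypothetical draft contract.
draft = ["role_clarity", "incident_procedures", "liability_allocation"]
print(missing_topics(draft))
```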

Common Mistakes to Avoid

Mistake 1: Assuming "Just Using AI" Means No Obligations

Even deployers have significant responsibilities, especially for high-risk systems.

Mistake 2: Not Recognizing Provider Status

Organizations that customize AI extensively may inadvertently become providers with much greater obligations.

Mistake 3: Relying Solely on Vendor Claims

A vendor saying "we handle compliance" doesn't eliminate your obligations as a deployer.

Mistake 4: Ignoring Role Shifts

Your role can change over time – regular reassessment is necessary.

Key Takeaways

- Classification is fundamental: Everything else in your compliance program depends on correctly identifying your role.

- Most organizations are deployers: Using third-party AI tools makes you a deployer, which still carries obligations.

- Providers face heavier burdens: Development and market placement trigger extensive technical requirements.

- You can be both: Many organizations have different roles for different AI systems.

- Document your analysis: Record why you classified each system as you did.

- Review regularly: Roles can shift as systems evolve or uses change.

- Contracts matter: Clear agreements with vendors protect everyone and clarify responsibilities.

Understanding your role isn't just a compliance checkbox – it's the foundation of your entire AI governance approach. Get this right, and everything else falls into place more easily.
